See which workloads in your cluster are talking to AI providers.

No code changes. No billing API integration. No credentials. Two minutes to install.

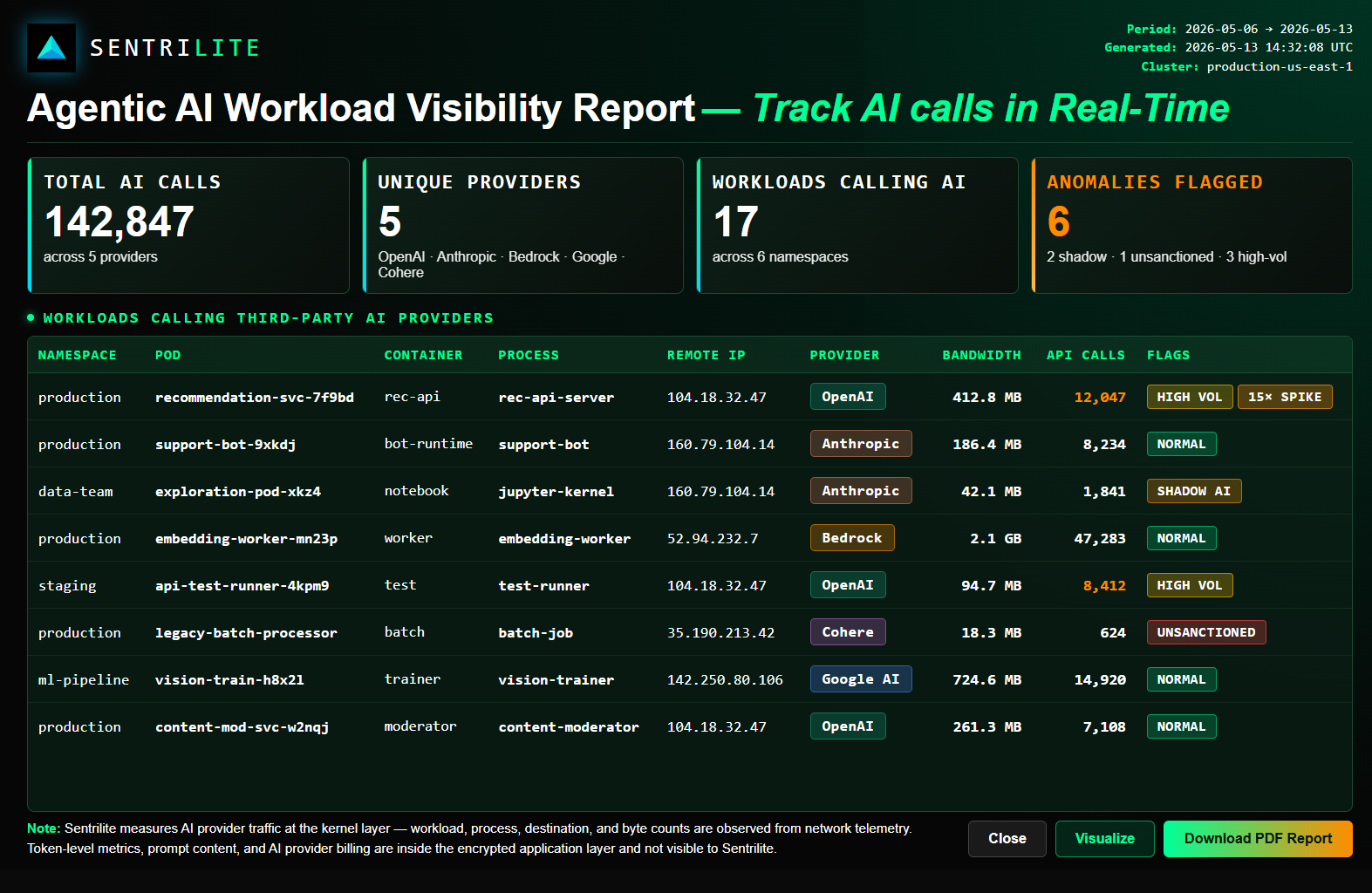

Sentrilite detects every outbound call from your environment to OpenAI, Anthropic, Bedrock, Google AI, and other AI services — at the kernel level.

- Detect when app X makes 1,000 calls to api.openai.com in an hour — and alert before it becomes a quota or budget surprise

- Get real-time bytes and call counts per workload, per AI provider — OpenAI, Anthropic, Bedrock, Google AI, and more

- Catch shadow AI: workloads calling AI providers that nobody on the FinOps or governance team knew about

- Surface policy violations: workloads calling unsanctioned AI providers outside your approved list

- Find dev or staging environments hammering production AI endpoints with the wrong credentials

What Sentrilite Surfaces

Workload-level network behavior for every AI call in your environment — visible without instrumentation, without API keys, without changing a single line of application code.

Workload → AI Provider Mapping

See exactly which Kubernetes pod, container, or process is calling OpenAI, Anthropic, Bedrock, Google AI, Cohere, Mistral, and others. Attribution down to the process and destination level.
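As a rough illustration of destination-based attribution, the sketch below maps connection events to AI providers by hostname. It is not Sentrilite's implementation: the event fields (`pod`, `process`, `dest_host`), the function names, and the hostname table are all assumptions made for the example, and it presumes each event already carries a resolved destination hostname.

```python
from collections import defaultdict
from typing import Dict, Iterable, Optional, Set, Tuple

# Illustrative hostname table (an assumption, not Sentrilite's actual list).
PROVIDER_HOSTS = {
    "api.openai.com": "OpenAI",
    "api.anthropic.com": "Anthropic",
    "generativelanguage.googleapis.com": "Google AI",
    "api.cohere.com": "Cohere",
    "api.mistral.ai": "Mistral",
}


def classify_destination(hostname: str) -> Optional[str]:
    """Return the AI provider for a destination hostname, or None."""
    host = hostname.lower().rstrip(".")
    for known, provider in PROVIDER_HOSTS.items():
        if host == known or host.endswith("." + known):
            return provider
    # Bedrock endpoints are regional, e.g. bedrock-runtime.us-east-1.amazonaws.com
    if host.startswith("bedrock") and host.endswith(".amazonaws.com"):
        return "Bedrock"
    return None


def map_workloads(events: Iterable[dict]) -> Dict[Tuple[str, str], Set[str]]:
    """Group providers by (pod, process). Event keys are hypothetical."""
    mapping = defaultdict(set)
    for event in events:
        provider = classify_destination(event["dest_host"])
        if provider is not None:
            mapping[(event["pod"], event["process"])].add(provider)
    return dict(mapping)
```

Connections to hosts that are not in the table are ignored here; a real deployment would need a maintained provider list.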

Call Volume and Frequency

Calls per hour, calls per day, bytes sent and received per workload per AI provider. Build a baseline of normal AI usage and detect when it changes.
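To make the idea of a baseline concrete, here is a minimal sketch that buckets calls per hour for each workload and provider, then flags an hour that sits well above the historical mean. The event tuple shape, the function names, and the three-sigma rule are assumptions chosen for illustration; they are not taken from Sentrilite.

```python
from collections import defaultdict
from statistics import mean, pstdev


def hourly_counts(events):
    """Count calls per hour.

    events: iterable of (epoch_seconds, workload, provider) tuples
    (a hypothetical shape). Returns {(workload, provider): {hour: count}}.
    """
    counts = defaultdict(lambda: defaultdict(int))
    for ts, workload, provider in events:
        counts[(workload, provider)][int(ts // 3600)] += 1
    return counts


def is_spike(history, current, min_sigma=3.0, min_calls=10):
    """Return True if `current` is far above the baseline in `history`.

    history: past hourly call counts for one workload and provider.
    The standard deviation is floored at 1.0 so that a perfectly flat
    baseline does not flag a change of a single call.
    """
    if len(history) < 2:
        return False  # not enough data to form a baseline
    threshold = mean(history) + min_sigma * max(pstdev(history), 1.0)
    return current >= min_calls and current > threshold
```

The same bucketing could be applied to bytes sent and received by summing byte counts instead of incrementing by one.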

Shadow AI Detection

Find AI calls happening in your environment that nobody knew about: workloads bypassing your AI gateway, use of unauthorized API keys, and dev environments hitting production AI providers.

Real-Time Alerts

Alert when a new workload starts calling AI providers. Alert when call volume exceeds a threshold. Alert when AI calls cross regions or use unsanctioned credentials.
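The first two alert conditions can be sketched as a small stateful rule engine. This is an illustrative example only: the class name, the `observe` method, and the alert strings are hypothetical, and the default limit of 1,000 calls per hour simply reuses the figure from the example earlier on this page.

```python
from dataclasses import dataclass, field


@dataclass
class AlertEngine:
    """Hypothetical in-memory alert rules, not Sentrilite's API."""

    calls_per_hour_limit: int = 1000
    seen: set = field(default_factory=set)      # (workload, provider) pairs
    window: dict = field(default_factory=dict)  # (workload, provider, hour) -> count

    def observe(self, ts, workload, provider):
        """Record one call and return any alerts it triggers."""
        alerts = []
        pair = (workload, provider)
        if pair not in self.seen:
            self.seen.add(pair)
            alerts.append(f"new workload calling AI provider: {workload} -> {provider}")
        key = (workload, provider, int(ts // 3600))
        self.window[key] = self.window.get(key, 0) + 1
        # Fire once per hour bucket, on the first call past the limit.
        if self.window[key] == self.calls_per_hour_limit + 1:
            alerts.append(f"call volume over limit: {workload} -> {provider}")
        return alerts
```

Firing only on the first call past the limit keeps a runaway loop from producing one alert per call.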

No Instrumentation, Ever

Works at the kernel level via eBPF. No SDK to install. No proxy to route through. No code changes. The agent runs as a single DaemonSet and sees every outbound connection in your cluster.

Why Shadow AI Matters

Most enterprises have zero visibility into where AI calls originate in their fleet. Developers experiment, prototypes ship to production, AI usage spreads through teams — and the security, compliance, and FinOps teams find out months later.

Shadow AI is the next shadow IT problem

Three years ago, the question was "which SaaS tools are our engineers using without IT knowing?" Today, the question is "which AI providers are our workloads calling without anyone tracking it?"

SDK-based AI observability tools (Helicone, Langfuse, LangSmith, OpenLLMetry) only see calls that have been instrumented or routed through them. They miss:

- Developers calling OpenAI directly with a personal API key

- Forked services that don't use the company's AI gateway

- Internal scripts and cron jobs making AI calls outside any monitoring

- Production workloads still on legacy AI integrations from before the gateway existed

- Third-party libraries that quietly call AI providers behind the scenes

Sentrilite sees all of it because it watches the network, not the SDK. Every outbound TCP connection from every workload, regardless of how the application was written.

Use Cases

Real patterns Sentrilite surfaces from kernel-level network observation. Each scenario below is the kind of finding you'd see in a typical Sentrilite report.

A workload's AI usage suddenly spikes. Could be a feature launch, could be a runaway loop, could be a credential being misused. Sentrilite catches it in minutes — before quota limits hit or the next billing cycle closes.

Live bytes flowing to every AI provider, broken down by workload. Track usage as it happens — call counts, byte volumes, source pods — instead of waiting for a daily report or a vendor dashboard.

Someone on the data team started experimenting with Claude. Nobody told the platform team. Nobody told security. Nobody told the AI governance committee. Sentrilite flags it immediately so the right conversations happen.

Your AI governance policy approves OpenAI and Bedrock. A workload is calling Cohere anyway. Sentrilite catches policy violations the moment they happen, not in the next compliance audit.
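A policy check of this kind reduces to comparing observed workload and provider pairs against an approved list. The sketch below assumes the pairs have already been extracted from network events; the function name and input shape are hypothetical.

```python
def find_violations(observed, approved):
    """Return sorted (workload, provider) pairs whose provider is not approved.

    observed: iterable of (workload, provider) pairs (hypothetical shape).
    approved: iterable of sanctioned provider names, compared case-insensitively.
    """
    approved_set = {name.lower() for name in approved}
    return sorted({(w, p) for w, p in observed if p.lower() not in approved_set})


# Usage, mirroring the scenario above: OpenAI and Bedrock are approved.
violations = find_violations(
    [("billing", "OpenAI"), ("search", "Cohere"), ("search", "Cohere")],
    ["OpenAI", "Bedrock"],
)
```

Duplicate observations collapse into a single violation per workload and provider.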

A staging environment is hammering the production OpenAI endpoint because someone forgot to switch to a test key. Sentrilite surfaces it the same day, before the dev team's monthly quota is gone.

What Sentrilite Does Not Do

Sentrilite does not report token usage for AI API calls. We surface workload-level network activity: which workloads are calling which AI providers, at what frequency and byte volume. Token-level metrics live inside the encrypted application layer, which network-level observation does not expose.

Find out where AI calls are happening in your cluster

Tell us a bit about your environment. We'll reach out within one business day to discuss AI Workload Visibility for your cluster.